Tag: ubiquiti

-

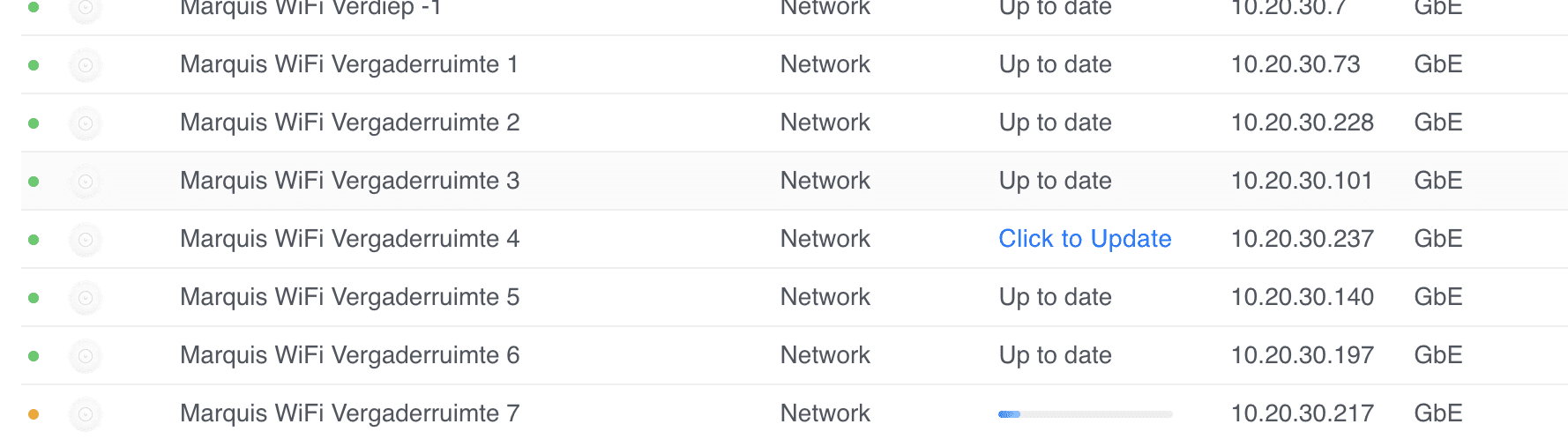

Unifi u6+ failing to upgrade

I have quite a few sites where some Unifi U6+ Access Points fail to upgrade with a generic “update failed” message, for example: “Marquis WiFi Vergaderruimte 4 update failed”. I’ve tried everything, from SSH’ing in and factory resetting with set-default to manually upgrading with upgrade. Nothing worked. I thought I had a bunch of bad APs (and many…

-

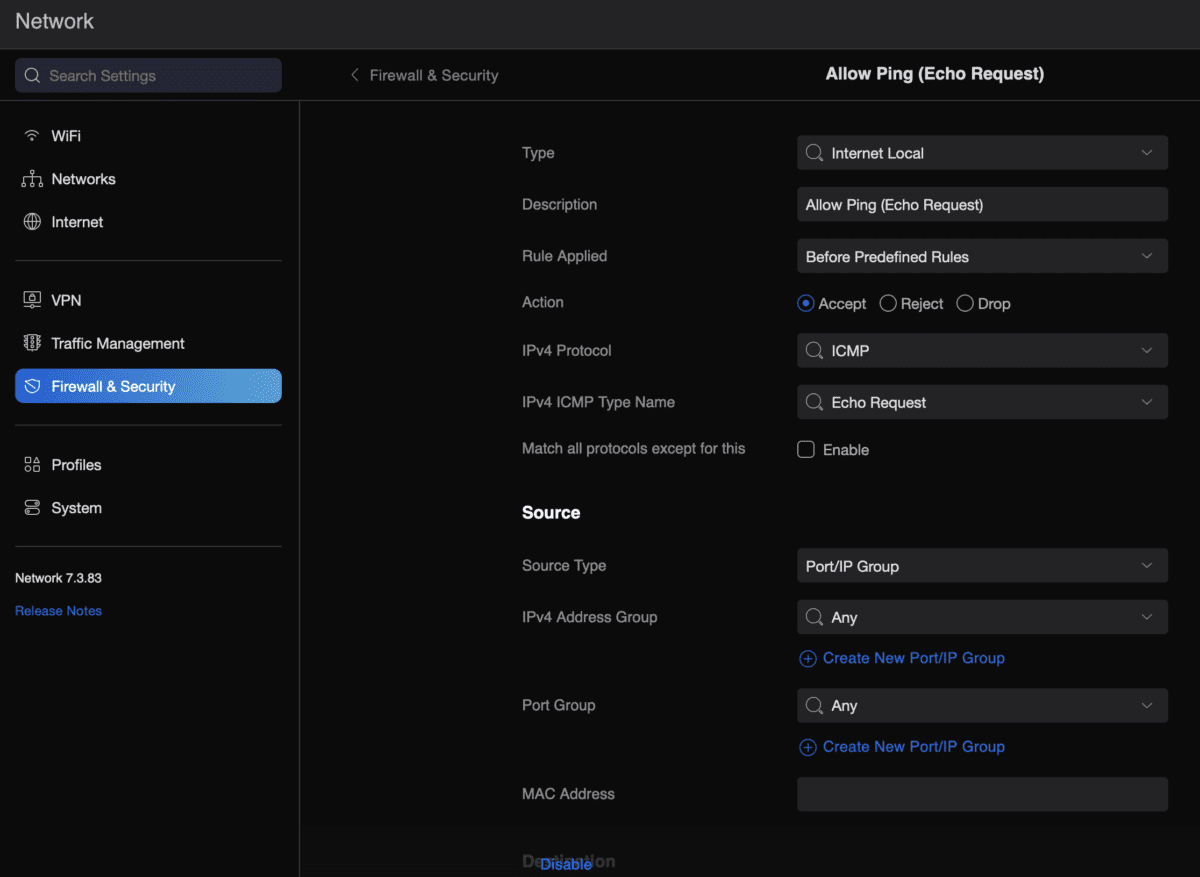

Allow ping from USG

Because I keep forgetting and it takes me far too much time to go through one of my million sites where I set this up and find the right config… To allow a USG (Unifi Security Gateway) to reply to external (WAN) ping requests, do the following: That’s it… All this for Smokeping.
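The steps themselves are not shown in this excerpt. As a rough sketch only, and not necessarily what the full post does, one common approach on a USG is a custom firewall rule on the `WAN_LOCAL` ruleset that accepts ICMP echo requests, supplied through a `config.gateway.json` file on the controller. The rule number `2000`, the description text and the helper name `allow_wan_ping_fragment` are assumptions made for illustration.

```python
import json


def allow_wan_ping_fragment(rule_number: int = 2000) -> dict:
    """Build a config.gateway.json-style fragment that accepts
    ICMP echo requests (ping) arriving on the WAN_LOCAL ruleset.

    The rule number is an assumption; pick one that does not clash
    with rules already present on the gateway.
    """
    return {
        "firewall": {
            "name": {
                "WAN_LOCAL": {
                    "rule": {
                        str(rule_number): {
                            "action": "accept",
                            "description": "Allow ping from WAN",
                            "protocol": "icmp",
                            # Only echo requests, not every ICMP type
                            "icmp": {"type-name": "echo-request"},
                        }
                    }
                }
            }
        }
    }


if __name__ == "__main__":
    # Print the fragment so it can be merged into an existing file by hand
    print(json.dumps(allow_wan_ping_fragment(), indent=2))
```

The output is meant to be merged into an existing `config.gateway.json` rather than to replace it, followed by a re-provision of the gateway.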

-

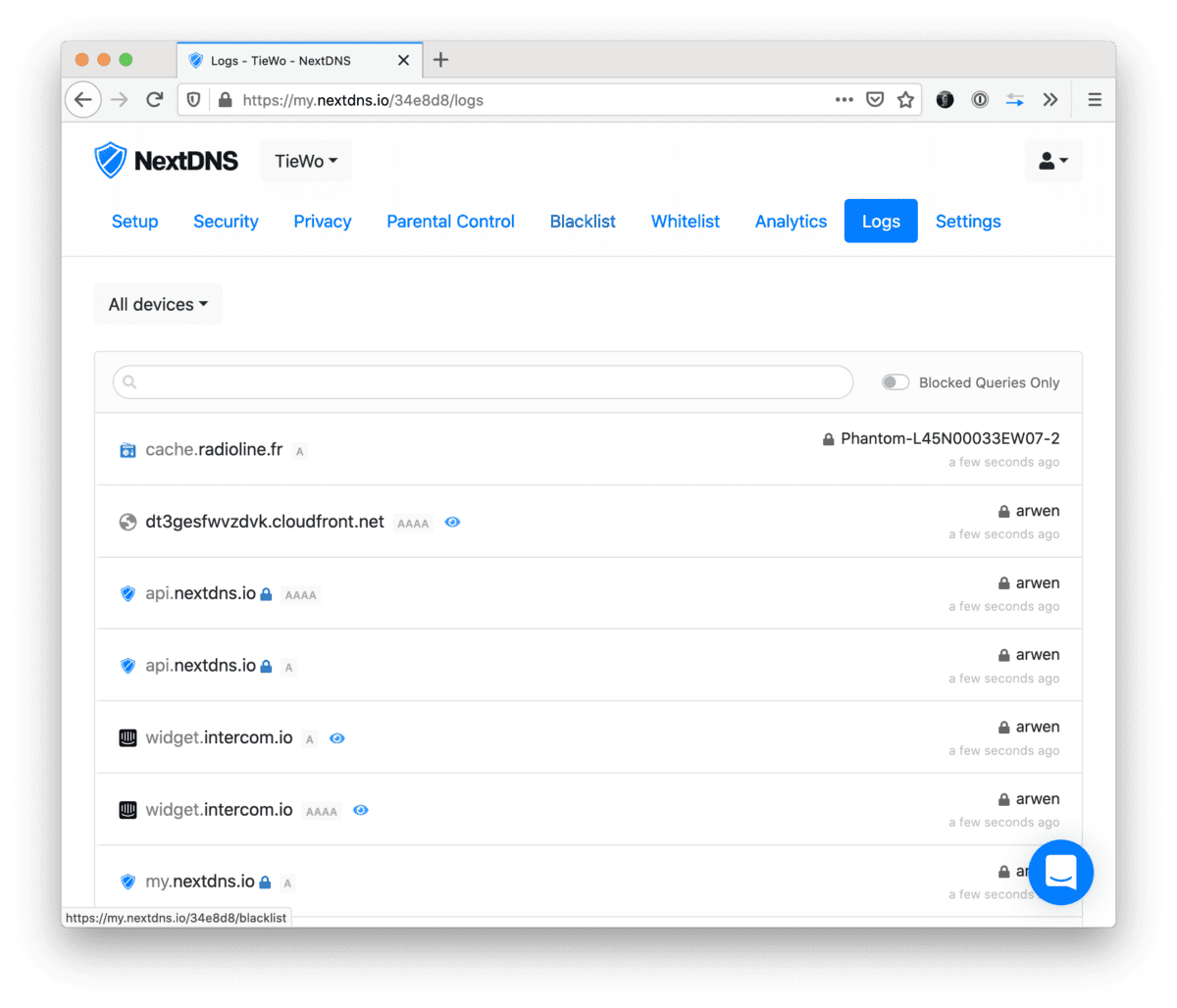

NextDNS, EdgeOS and device names

Noticed that NextDNS was reporting old hostnames in the logs: for example, old device names (devices that changed hostnames), devices that were definitely no longer on the network, or IPs that were matched to the wrong hostnames. The culprit is how EdgeOS deals with its hosts file. Basically it just keeps all the old hosts…

-

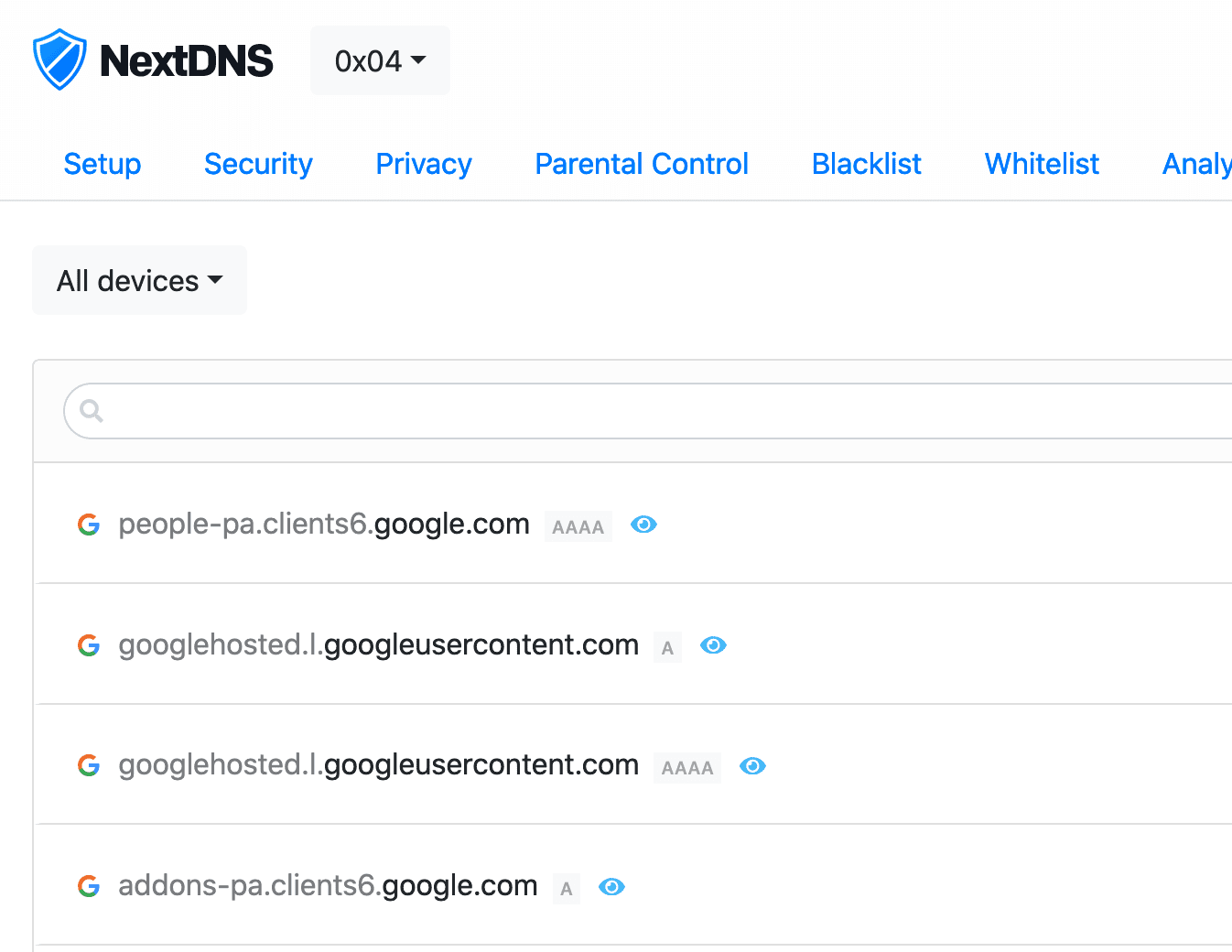

NextDNS + EdgeRouter + Redirecting DNS requests

Realised I haven’t updated this in a long while (life happened). A couple of weeks ago I started to play with NextDNS, and I really recommend it to anyone who’s somewhat privacy-minded and cares about the stuff happening on their network. I’ve set up several configs (home, parents, FlatTurtle TurtleBox (the NUCs controlling the screens)) and…

-

Edgerouter IPsec tunnel to Fritzbox

So, I have an EdgeRouter Lite in Singapore (Starhub) and a FritzBox in Belgium (EDPnet). This is mostly stuff that I have found in several articles, mostly from here. ERL: eth0 is WAN; eth1 (10.60.111.0/24) and eth2 (unused, not VPN’ed) are LAN. FritzBox: 192.168.1.0/24. This is the FritzBox config (go to VPN and then Import…

-

FlatTurtle in elevators: making of

First tests at Glaverbel (circle or “O” shaped building) in Watermael-Boitsfort with 12 lifts (about a year ago). The network wiring makes a full circle, starting from the internet connection in the technical room (near the entrance hall). In this design from the 1960s the lift machine rooms had one shared/common room where we installed switches (to avoid…

-

Turtle shaped WiFi

Demolished a Unifi from Auki and built a 3D-printed turtle around it. Came out very nicely, and it’s quite solid. 3D renders: Actual printed design: Opened up Unifi: Design by Seendesign. More at FlatTurtle’s blog.

-

Outdoor WiFi (120onCortenbergh)

About a year later… Except for not being white anymore, it still looks good. Outdoor Unifi (previous model) connected to Auki. Picture enhanced by Google Plus to add dramatic effect. 😉 Original picture here.

-

Outdoor WiFi (Pegasus Park)

Point to Point transmitters (Loco M2). Point to Point receiver (Loco M2). Boxes with power, PoE and switches. Outdoor Access Point (UAP Outdoor+).